Quality 101

I Think I Need AI!

What is AI?


By Ed Goffin

Manufacturers of all sizes struggle with the cost of poor product quality, whether that translates into slower production, decreased profits, or unnecessary waste. Even worse, poor quality can do irreversible damage to brand reputation. In the food and beverage market, 20 percent of consumers say they will not purchase from a brand following a product recall.

While artificial intelligence (AI) is gaining favor as a solution to quality problems, it brings a number of new, sometimes confusing, terms. As a first step, many manufacturers ask “What is AI?”

Understanding Machine Vision and AI

Machine vision is a mainstay on today’s manufacturing floor, thanks to programmers’ ability to continuously train inspection systems to make automated decisions. To achieve accurate results, a machine vision system includes enough intelligence to compare training data to actual objects.

Rules-based machine vision algorithms excel when there is consistency, whether in the types of defects or in the material being inspected. If parameters change, the algorithm needs to be manually updated. Rules-based programming particularly struggles when inspecting variable surfaces, such as glass, metal, or textured material.
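To make the limitation concrete, here is a minimal sketch of a rules-based check. Everything in it is an illustrative assumption: the brightness band, the function name `rules_based_inspect`, and the pixel values stand in for whatever a real inspection system measures.

```python
from statistics import mean

# Hypothetical hard-coded rule: a part passes only if its mean pixel
# brightness falls inside a fixed band chosen by a programmer.
MIN_BRIGHTNESS = 100
MAX_BRIGHTNESS = 160


def rules_based_inspect(pixels):
    """Return True (pass) if mean brightness lies within the fixed band."""
    return MIN_BRIGHTNESS <= mean(pixels) <= MAX_BRIGHTNESS


matte_part = [120, 130, 125, 128]   # consistent surface, mean 125.75
glossy_part = [90, 250, 240, 245]   # acceptable part with glare, mean 206.25

print(rules_based_inspect(matte_part))   # True
print(rules_based_inspect(glossy_part))  # False: rejected because of glare
```

The second part is rejected only because glare pushes it outside the band. Accepting it means someone has to edit the constants by hand, which is the manual update described above.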

The promise of AI is to remove some of the ‘hard-coded’ limitations of traditional programming so inspection systems can be more versatile. This is especially valuable as consumer preferences tend towards customization and organizations manufacture short-run products with different packaging and grading requirements. Another key area for AI is reducing the false positives and false negatives generated by rules-based inspection, which require costly and time-consuming secondary review.

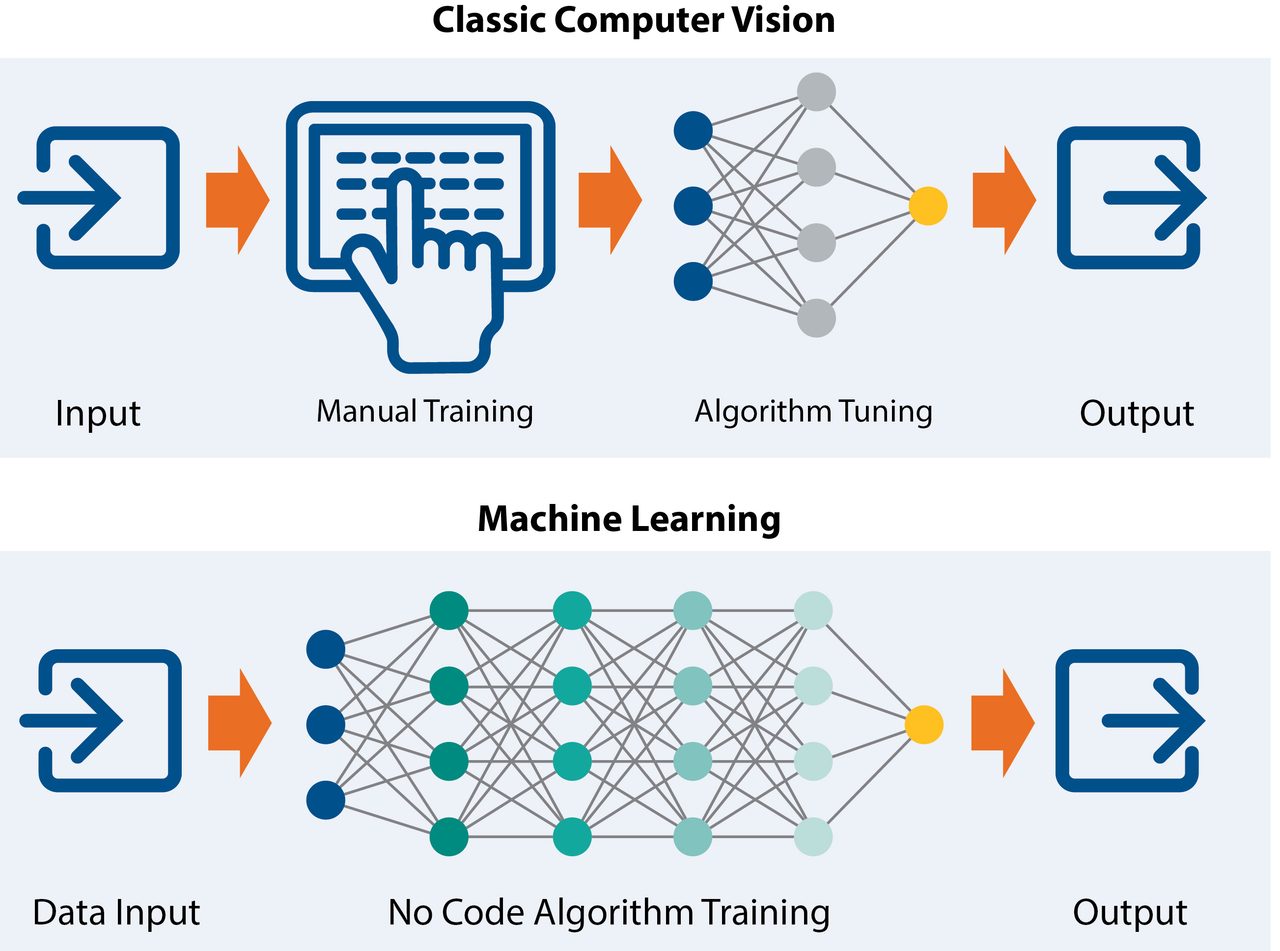

Broadly defined, AI is a machine solving a problem in a way that we consider intelligent. Machine learning is a branch of AI where computers are provided with a set of data to learn how to solve a specific problem. Instead of recoding a hard-wired algorithm, the AI system can use good and bad images to essentially retrain itself. It’s important to note that humans are still involved in AI; we need to provide the initial data so that the AI algorithm can learn from that data.
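The idea of retraining from good and bad examples, rather than recoding a rule, can be sketched with a deliberately simple learner. This is a nearest-centroid classifier on a single made-up feature, not a neural network and not any vendor’s method; the names `train` and `predict` and all sample values are assumptions for illustration.

```python
from statistics import mean


def train(good_samples, bad_samples):
    """Learn one centroid (average feature value) per label."""
    return {"good": mean(good_samples), "bad": mean(bad_samples)}


def predict(model, value):
    """Assign the label whose learned centroid is closest to the value."""
    return min(model, key=lambda label: abs(model[label] - value))


# First product run: humans supply labelled examples, the model learns from them.
model = train(good_samples=[120, 125, 130], bad_samples=[60, 70, 65])
print(predict(model, 118))  # good

# A new product run: the code is unchanged, only the training data differs.
model = train(good_samples=[200, 210, 205], bad_samples=[120, 125, 130])
print(predict(model, 118))  # bad
```

The same reading of 118 is judged differently after retraining, and no line of the algorithm was edited. The labelled data carries the change.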

Deep learning then expands machine learning methods to powerful neural networks that are inspired by the way our brains function. With training and labelling, a user teaches the system how to make a decision based on good or bad outcomes. The neural network then starts analyzing the data and making its own decision.

Image 1: The branches of AI lead to machine-based decision making.

“No Code” Algorithms and Edge Processing

Most manufacturers evaluating AI are chiefly concerned with the cost and complexity of design and deployment.

There is a misconception that AI algorithm training and development is complicated, with added costs to bring on new staff or external expertise. “No code” algorithm design breaks those notions. New ‘drag-and-drop’ development platforms mean anyone can develop, train, and test an AI algorithm in a few hours. Advanced users can leverage these platforms to mix AI and machine vision algorithms, or customize widely available open source models. In addition, plug-ins for common inspection tasks provide a framework that is easily trained on a user’s unique data to build a custom algorithm.

In tandem with advances that simplify algorithm development, the power, performance, and cost improvements of embedded technologies make it much easier to add advanced capabilities to inspection applications.

In a retrofit upgrade, edge processing solutions can intercept the existing video feed and apply an AI skill. In these applications, high-performance and low-power edge platforms allow manufacturers to add advanced AI inspection skills without disrupting inline infrastructure and processes. In manual inspection, new edge processing-based systems let human operators leverage AI capabilities to automate processes and help inform decisions.

Edge processing is also changing where decision-making can happen. Traditional machine vision systems rely on networked devices sending data to centralized processing. Embedded vision takes centralized processing power and places it at points where local decisions need to be made. For complex robotics and industrial internet of things (IIoT) applications, the combination of small form factor smart embedded sensors and AI enables automated decision-making at different points in a networked system.

Hybrid AI and Inline Inspection

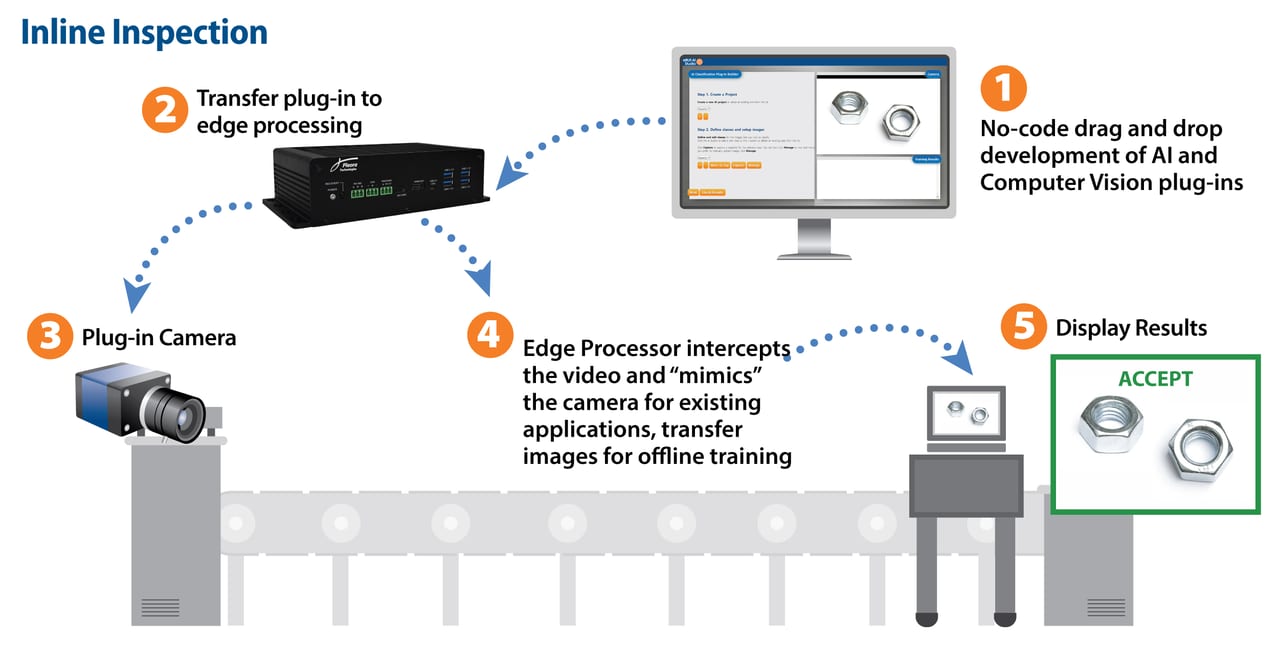

Hybrid AI merges the best of rules-based machine vision with more flexible AI capabilities to help improve accuracy in existing systems, without adding deployment complexities and infrastructure costs.

Leveraging embedded processing and a hybrid architecture, AI can be deployed alongside existing traditional machine vision inspection systems. In this deployment strategy, the embedded device acts as an intermediate device between the camera and host PC.

The embedded device ‘mimics’ the camera for existing applications and automatically acquires the images and applies the AI algorithm on top of the camera feed. Processed data is then sent over GigE Vision to the inspection application, which receives it as if it were still connected directly to the camera. This allows the re-use of existing cameras, controllers and end-user applications. The embedded device can be programmed to save incoming images for continuous offline training to improve inspection results.
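The intermediary role described above can be sketched in plain Python. This models only the data flow, not GigE Vision or any real camera SDK. The camera, the `ai_skill` placeholder, the `EmbeddedDevice` class, and the `ai_verdict` field are all hypothetical names chosen for illustration.

```python
from collections import deque


def camera_frames():
    """Stand-in for the existing camera: yields frames as dictionaries."""
    yield {"id": 1, "pixels": [120, 125, 130]}
    yield {"id": 2, "pixels": [60, 70, 65]}


def ai_skill(pixels):
    """Placeholder for a trained model; here a trivial brightness check."""
    return "pass" if sum(pixels) / len(pixels) >= 100 else "fail"


class EmbeddedDevice:
    """Sits between camera and host and exposes the same kind of frame stream."""

    def __init__(self, source, skill, archive_size=1000):
        self.source = source
        self.skill = skill
        # Incoming frames are kept so they can be used for offline training.
        self.archive = deque(maxlen=archive_size)

    def frames(self):
        for frame in self.source:
            self.archive.append(frame)
            # Forward the original fields plus the AI result (assumed field name).
            yield {**frame, "ai_verdict": self.skill(frame["pixels"])}


def host_application(stream):
    """Existing application: reads frames exactly as it would from a camera."""
    return [(frame["id"], frame.get("ai_verdict", "unprocessed")) for frame in stream]


device = EmbeddedDevice(camera_frames(), ai_skill)
print(host_application(device.frames()))  # [(1, 'pass'), (2, 'fail')]
print(len(device.archive))                # 2
```

Because the host application consumes the same frame fields either way, it also runs against `camera_frames()` directly, which is the point of the ‘mimic’ design: the camera and the application stay as they are.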

Hybrid AI is well-suited for manufacturers already invested in machine vision quality inspection. Layering AI capabilities on top of existing machine vision processes reduces false positives; even a few percentage points of improvement significantly reduces product waste, lowers costs related to secondary screenings, and increases production uptime. AI is also advantageous for custom ‘short-run’ inspection requirements, where products have different threshold requirements.
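A quick back-of-the-envelope calculation shows why a few percentage points matter. The figures below are invented purely for illustration and do not come from any measured production line; `fewer_false_rejects` is a hypothetical helper.

```python
def fewer_false_rejects(parts_per_day, fp_percent_before, fp_percent_after):
    """Good parts per day that no longer get wrongly rejected."""
    return parts_per_day * (fp_percent_before - fp_percent_after) / 100


# Assumed example: 100,000 parts per day, false positives drop from 5% to 3%.
saved = fewer_false_rejects(100_000, 5, 3)
print(saved)  # 2000.0
```

Under these assumed numbers, a two-point improvement means 2,000 fewer good parts per day sent to scrap or secondary review.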

Image 2: Classic computer vision requires human input and algorithm fine-tuning to meet different requirements. In comparison, “no code” AI software training packages simplify algorithm development and deployment of more flexible, adaptable inspection capabilities.

AI and Manual Inspection

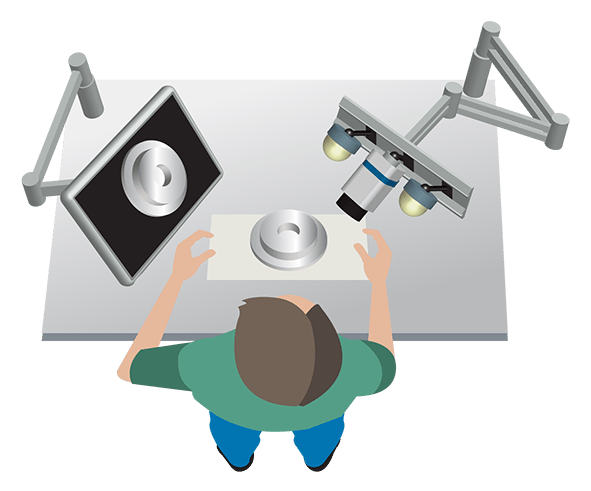

An emerging area for AI is automating or adding decision-support for manual inspection, where manufacturers report error rates as high as 30 percent. Humans are well-suited for inspection tasks, but when we get tired or distracted AI is a powerful tool to help alert us to issues and guide our decisions. Automating manual inspection speeds inspection rates, improves end-to-end product quality, and provides more qualitative product evaluation to ensure manufacturing processes are repeatable and traceable.

New systems for manual inspection leverage no-code algorithm design and edge processing deployment, together with cameras, lighting, and display software. The plug-and-play system is easily trained on a manufacturer’s unique data to implement end-to-end quality checks and workflows, without requiring software coding skills. An intuitive user display guides operators through the manufacturing process and automatically identifies and visually highlights differences and deviations.

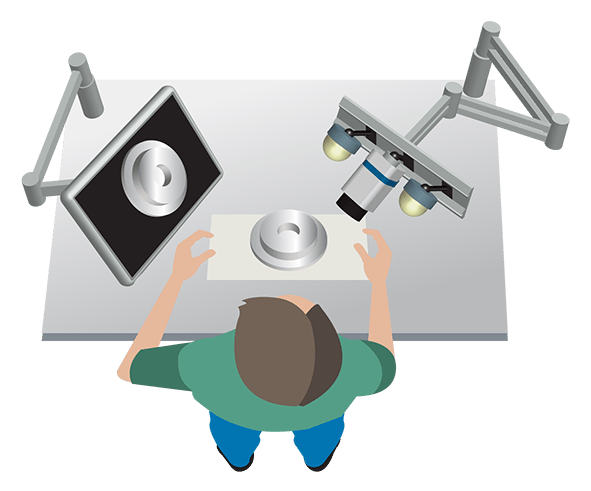

Image 3: The combination of easy-to-program AI algorithms and edge processing enables turnkey inspection systems to automate manual inspection.

AI, Industry 4.0, and the Cloud

The cloud is a recurring topic of discussion, and confusion, for organizations considering more comprehensive monitoring and full factory analytics. Quality inspection generates a significant amount of data that can provide further insight into process and workflow efficiencies, machine health, and more. As a first step, the cloud could become an important repository for analytics data so manufacturers can evaluate processes and track product faults. Organizations are also evaluating the cloud as a way to share algorithms across global manufacturing sites to ensure consistent product quality and collaboration.

AI Doesn’t Need to Be Complex

Despite new terms and technologies, in the end AI is a tool that manufacturers can use to prevent costly, time-consuming, and brand-damaging mistakes. With a hybrid approach, simplified training, and new edge processing technologies, it can be relatively straightforward to add AI to existing inspection applications or start automating manual tasks.

Opening Video Background Source: jitendrajadhav/Creatas Video via Getty Images.

Image Source: Pleora

Ed Goffin (edg.goffin@pleora.com) is marketing manager with Pleora.
