CT for Dimensional Precision Measurement Reaches Production Floors

Industrial Computed Tomography

In the use of CT as a measuring instrument, the aerospace, automotive and medical technology industries are pioneers.

By Florian Knigge

Over the past few years, industrial computed tomography has steadily advanced in speed, degree of automation and accuracy. Because it can capture, display and analyze the internal structures of objects nondestructively, in high resolution and in three dimensions, it is gaining importance as a precise 3D measuring technology for production, in addition to its classic application fields of research and development and failure analysis.

Significantly shorter scanning and evaluation times, automated positioning and calibration, and significantly higher, reproducible measurement accuracy: industrial computed tomography has made considerable progress in recent years. As a result, the technology, long established in research and quality laboratories, has now also reached the production floor. The volume data generated is no longer used only for standard nondestructive testing, i.e. the search for defects such as cracks or pores, but increasingly also for the measurement and imaging of complex components and tools. This is because CT systems can quickly and easily capture internal geometries, cavities and undercuts, whereas optical or tactile coordinate measuring machines often require destroying the part, or expensive custom fixtures and a lot of time, to produce a result.

In the use of CT as a measuring instrument, the aerospace, automotive and medical technology industries are pioneers. Typical components measured include plug-in connectors, multi-material assemblies, injection nozzles, turbine blades, and implants. The increasing use of additive manufacturing also produces components whose interior geometries were previously unattainably complex and are inaccessible to other measuring methods. In these cases, CT helps optimize and shorten development and initial sampling processes as well as production ramp-up. It can also be used for quality control and process optimization during production.

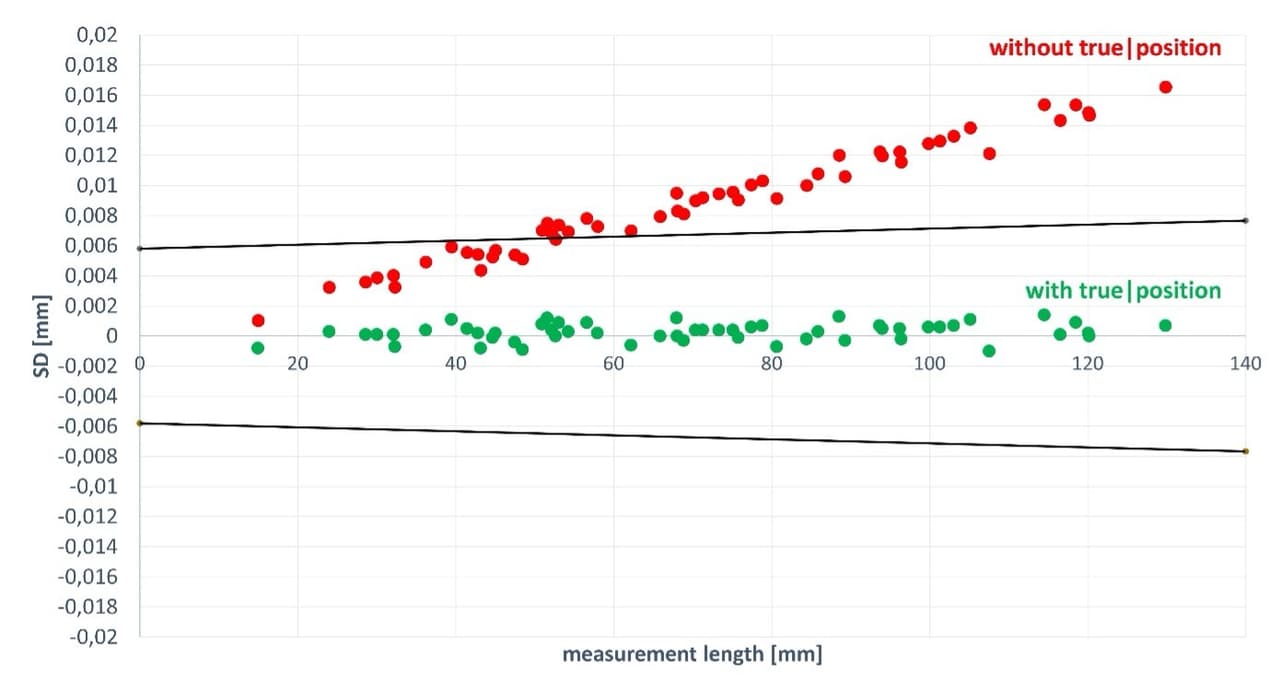

The True|position technology of Waygate Technologies extends the specified accuracy to all measuring positions.

Complete geometry acquisition and high information density

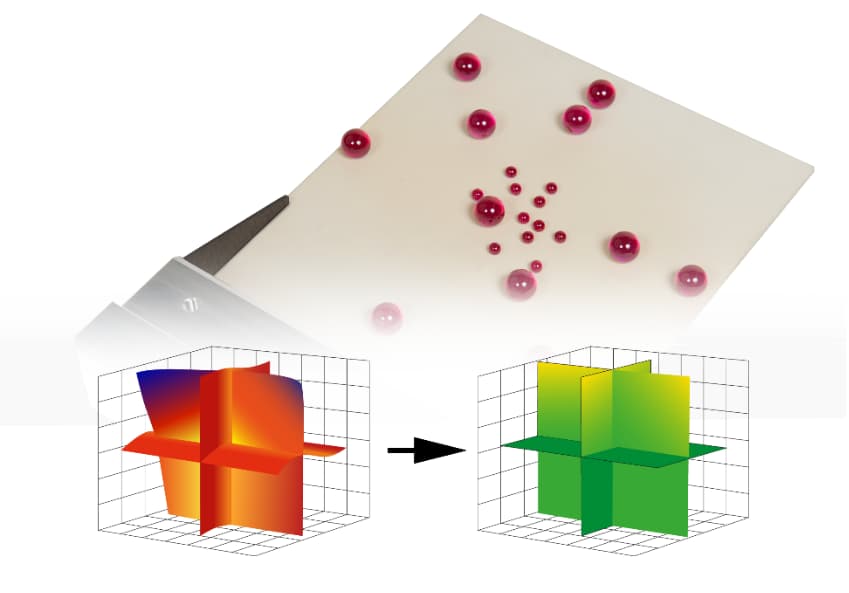

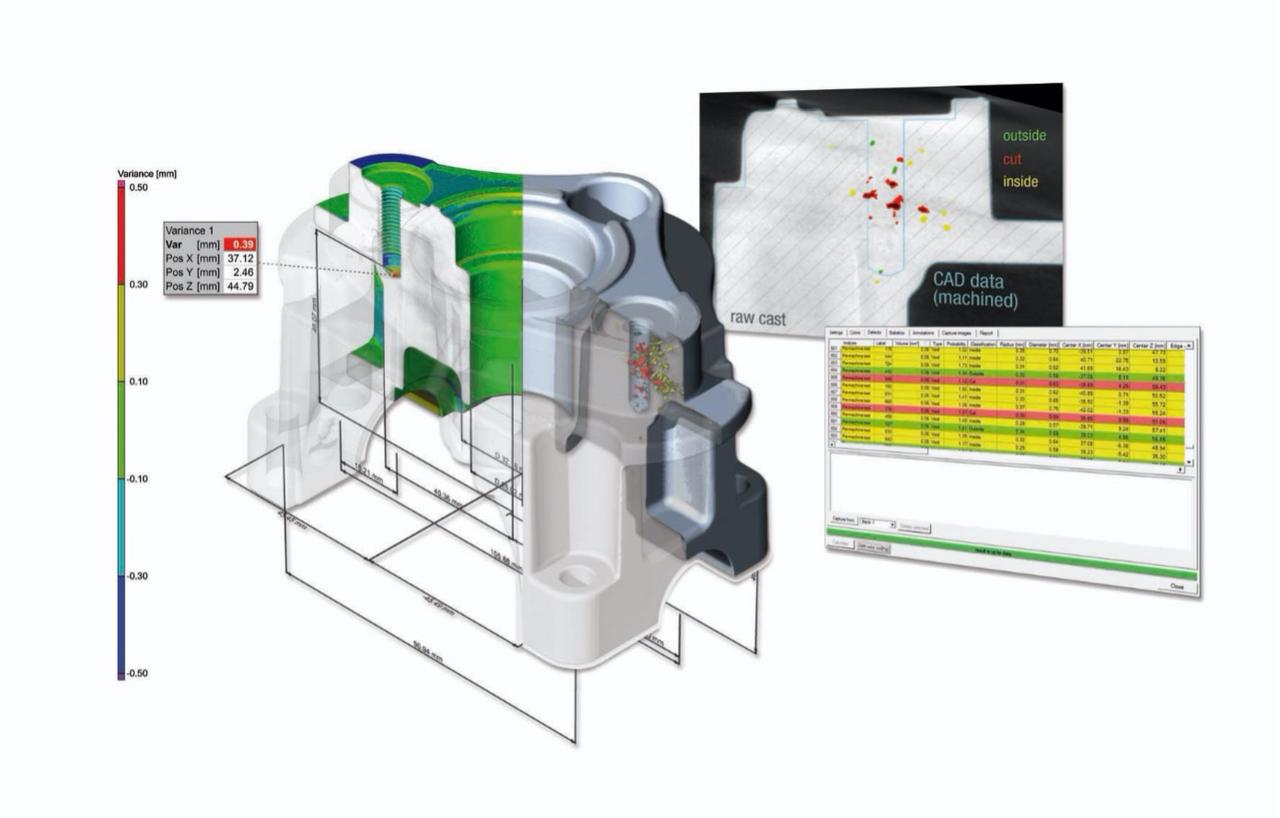

A single CT scan captures the complete geometry of a sample in three dimensions, with high information density and all inspection characteristics, and allows any desired virtual section to be created afterwards. This opens up new analysis options and time savings in quality control. An automatic porosity analysis, for example, can display the size of inclusions distributed throughout the component in tabular or color-coded form. This allows conclusions to be drawn about the quality of the injection process or the stability of the workpiece.
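As a rough illustration of how such a tabular porosity result can be derived from volume data, the following sketch labels connected low-density voxels and lists their sizes. It assumes a simple global gray-value threshold and 6-connectivity; the function name `pore_table` and its parameters are hypothetical, and commercial evaluation software typically uses more refined, locally adaptive defect detection.

```python
from collections import deque

import numpy as np


def pore_table(volume, threshold, voxel_mm):
    """List connected regions of voxels below `threshold`, largest first.

    Assumptions for this sketch: a global gray-value threshold separates
    pores from material, and voxels are connected via shared faces only.
    """
    pores = volume < threshold
    seen = np.zeros(pores.shape, dtype=bool)
    steps = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    table = []
    for start in zip(*np.nonzero(pores)):
        if seen[start]:
            continue
        # Breadth-first flood fill over one pore.
        seen[start] = True
        queue = deque([start])
        count = 0
        while queue:
            z, y, x = queue.popleft()
            count += 1
            for dz, dy, dx in steps:
                nb = (z + dz, y + dy, x + dx)
                inside = all(0 <= c < s for c, s in zip(nb, pores.shape))
                if inside and pores[nb] and not seen[nb]:
                    seen[nb] = True
                    queue.append(nb)
        table.append({"voxels": count, "volume_mm3": count * voxel_mm ** 3})
    return sorted(table, key=lambda row: row["voxels"], reverse=True)
```

Each row of the returned list corresponds to one inclusion, so the result can be printed as a table or mapped onto a color scale by pore volume.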

The figure illustrates additional possible applications of CT such as the traditional dimensioning of standard geometries and profiles including all known form and position tolerances. A nominal/actual comparison displays the geometric deviations of a sample on an easily interpretable color scale. In this case, a comparison with the CAD data of the workpiece also shows that the largest detected pore clusters are located in areas that will be removed anyway in the course of further machining.

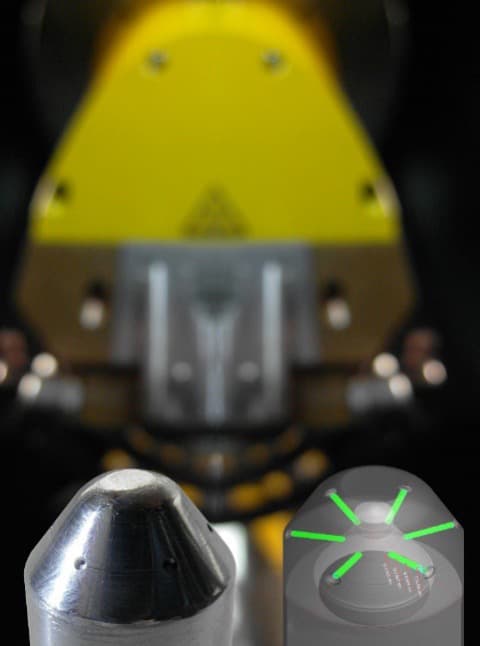

In combination with True|position, the Ruby|plate calibration body allows extremely accurate geometry correction.

Faster and easier capture of even hidden internal geometries also makes industrial CT attractive for dimensional measurements on the production floor.

How a CT system becomes a 3D measuring instrument

For a CT system to be used for precision measurement tasks, calibration by the manufacturer is indispensable. It guarantees that the system reaches and maintains the specified measuring accuracy, which is in the range of a few µm for microfocus systems. To ensure that temperature fluctuations do not influence the measurement, the system is kept at a defined temperature in a climate chamber for several days. Only then does the calibration of central system components such as the flat panel detector, the manipulator and the X-ray tube begin.
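To see why temperature matters at an accuracy level of a few µm, linear thermal expansion can be estimated with the standard relation ΔL = α · L · ΔT. The sketch below is purely illustrative: the function name is hypothetical, and the expansion coefficients are typical handbook values for aluminum and steel, not figures from any CT system specification.

```python
def thermal_growth_um(length_mm, alpha_per_k, delta_t_k):
    """Length change in micrometers from linear thermal expansion.

    Uses dL = alpha * L * dT and converts millimeters to micrometers.
    """
    return length_mm * alpha_per_k * delta_t_k * 1000.0


# Assumed typical expansion coefficients in 1/K (handbook values).
ALPHA_ALUMINUM = 23e-6
ALPHA_STEEL = 12e-6

# A 100 mm aluminum part warming by 1 K grows by about 2.3 µm.
growth_aluminum = thermal_growth_um(100.0, ALPHA_ALUMINUM, 1.0)
# The same length in steel grows by about 1.2 µm.
growth_steel = thermal_growth_um(100.0, ALPHA_STEEL, 1.0)
```

Under these assumptions, a change of a single kelvin already shifts a 100 mm dimension by an amount comparable to the specified accuracy.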

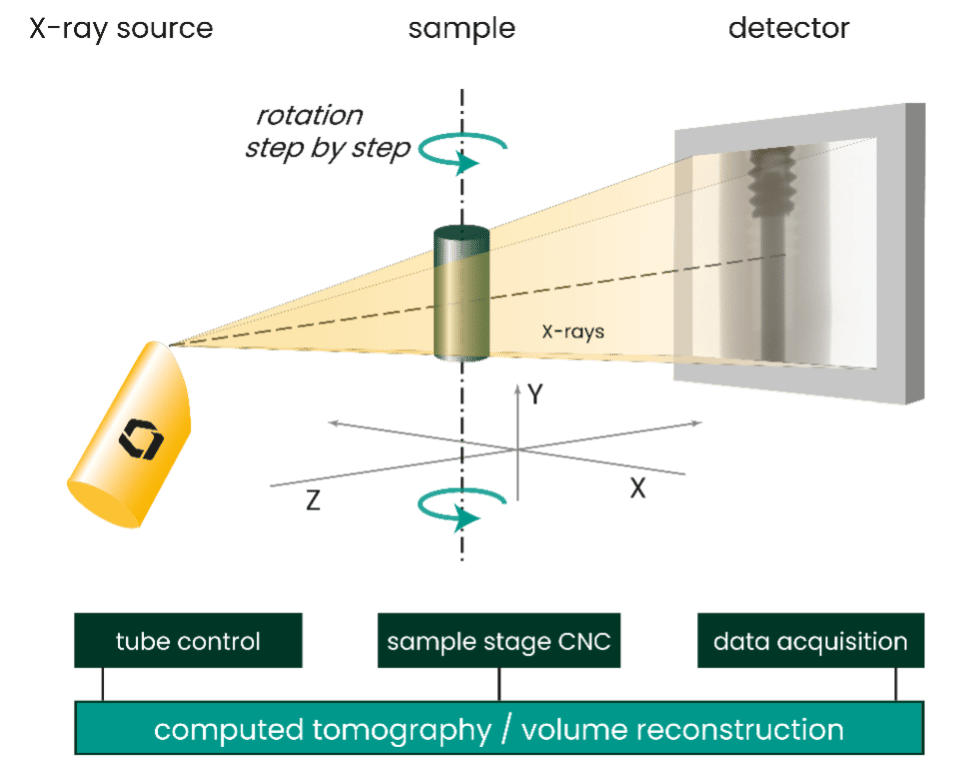

The actual physical measurement in CT systems consists of taking a series of 2D X-ray projection images. For this purpose, the test object is positioned on a very precise manipulation system and rotated once by 360° during the measurement with the help of a precision rotary axis. The 2D projection images are typically acquired in angle steps of <0.5°. The sharpness of the X-ray images, which depends largely on the quality of the X-ray source and detector, and the precision and stability of the system’s geometry together determine the quality of the raw data in this process.
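The relationship between angle step and number of projections can be made concrete with a short sketch. The helper name `projection_angles` is hypothetical and evenly spaced angles over one full turn are assumed; actual acquisition software may choose its angles differently.

```python
import math


def projection_angles(step_deg):
    """Return evenly spaced rotation angles (degrees) for one 360° turn.

    The step is rounded so that a whole number of projections fits into
    the full rotation; the actual step is never larger than `step_deg`.
    """
    n = math.ceil(360.0 / step_deg - 1e-9)
    return [i * 360.0 / n for i in range(n)]


angles = projection_angles(0.5)
# A step of exactly 0.5° gives 720 projections, so a step below 0.5°
# means more than 720 images per scan.
```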

The volume data set of the test specimen is then generated from the raw data by numerical reconstruction, typically using a filtered back projection algorithm. For an optimal measurement result, the reconstruction algorithm must take into account the geometric measurements of the system components previously determined in the calibration by the manufacturer, and correct for physical effects such as beam hardening or thermal expansion.
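The principle of filtered back projection can be sketched in a few lines. The example below is a deliberately simplified 2D parallel-beam version using NumPy: each projection is ramp-filtered in the frequency domain and then smeared back across the image along its viewing direction. Industrial systems use cone-beam geometry together with calibration data and artifact corrections, none of which is modeled here; all function names are hypothetical.

```python
import numpy as np


def ramp_filter(sinogram, ds):
    """Apply a ramp filter to each projection (rows) via zero-padded FFT."""
    n_det = sinogram.shape[1]
    n_pad = 2 ** int(np.ceil(np.log2(2 * n_det)))
    freqs = np.fft.fftfreq(n_pad, d=ds)
    spectrum = np.fft.fft(sinogram, n=n_pad, axis=1)
    filtered = np.real(np.fft.ifft(spectrum * np.abs(freqs), axis=1))
    return filtered[:, :n_det]


def fbp_parallel(sinogram, angles, ds):
    """Reconstruct a square slice from parallel-beam projections.

    sinogram: array of shape (n_angles, n_det), line integrals
    angles:   projection angles in radians, covering [0, pi)
    ds:       detector pixel spacing (also used as image pixel size)
    """
    filtered = ramp_filter(sinogram, ds)
    n_det = sinogram.shape[1]
    s_det = (np.arange(n_det) - (n_det - 1) / 2) * ds
    x, y = np.meshgrid(s_det, s_det)
    image = np.zeros((n_det, n_det))
    for row, theta in zip(filtered, angles):
        # Detector coordinate hit by the ray through each image pixel.
        s = x * np.cos(theta) + y * np.sin(theta)
        image += np.interp(s, s_det, row, left=0.0, right=0.0)
    return image * np.pi / len(angles)


def disk_sinogram(radius, n_det, ds, n_angles):
    """Analytic projections of a centered disk with density 1 (test object)."""
    s_det = (np.arange(n_det) - (n_det - 1) / 2) * ds
    chord = 2.0 * np.sqrt(np.clip(radius ** 2 - s_det ** 2, 0.0, None))
    return np.tile(chord, (n_angles, 1))
```

Feeding the analytic disk projections into `fbp_parallel` should return a slice with values close to 1 inside the disk and close to 0 outside, which is a convenient sanity check for the filter scaling.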

Once a complete volume data set of the test specimen is available, the surface data is extracted and exported into the 3D evaluation software to perform the final steps of the measuring task. These could be, for example, a nominal/actual comparison of surface data and CAD model with variance analyses and wall thickness measurements, or measurements based on fitting standard geometries and profiles for classic GD&T tasks.
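Fitting a standard geometry to extracted surface points can be illustrated with a least-squares sphere fit. This is a minimal sketch using the common algebraic formulation and NumPy; the function names are hypothetical, and metrology software may use different fitting criteria (for example Chebyshev or orthogonal-distance fits) depending on the GD&T task.

```python
import numpy as np


def fit_sphere(points):
    """Algebraic least-squares sphere fit.

    Rewrites |p - c|^2 = r^2 as |p|^2 = 2 c.p + (r^2 - |c|^2), which is
    linear in the center c and in the combined constant term.
    """
    pts = np.asarray(points, dtype=float)
    design = np.hstack([2.0 * pts, np.ones((len(pts), 1))])
    target = np.sum(pts ** 2, axis=1)
    solution, *_ = np.linalg.lstsq(design, target, rcond=None)
    center = solution[:3]
    radius = np.sqrt(solution[3] + center @ center)
    return center, radius


def radial_deviations(points, center, radius):
    """Signed distance of each point from the fitted sphere surface."""
    pts = np.asarray(points, dtype=float)
    return np.linalg.norm(pts - center, axis=1) - radius
```

The signed deviations returned by `radial_deviations` are the kind of per-point values a nominal/actual comparison maps onto a color scale, here relative to a fitted sphere rather than a CAD model.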

The automatic porosity analysis highlights inclusions in tabular or colored form after just a single scan.

Improved process chain for constant precision according to VDI 2630

Until just a few years ago, there was hardly any way to compare the metrology performance of individual high-resolution CT systems. The VDI guideline 2630, introduced and declared binding for all manufacturers only a decade ago, first defined an industrial standard for determining measurement accuracy, and thus for determining the suitability of CT systems for inspection processes.

Good measurement accuracy results from the process chain for 3D measurement with CT systems described above. This is because the quality of the measured raw data (CT projection data) is essential for the accuracy of all subsequent evaluations. In addition to a stable system design optimized for the respective application, the right combination of hardware and software tools to minimize geometric deviations is key to successful precision measurement with computed tomography.

For a long time, customers shied away from the time-consuming positioning and initial calibration as well as the regularly required re-calibration of the system, both of which are necessary for the successful use of CT as a high-precision measuring instrument. Many considered this effort reason enough not to acquire a CT system for this application, especially in a production environment. Here, too, some CT systems have since improved in handling, reducing the associated effort and expense.

Set-up of a CT system for dimensional precision measurement

Recent developments of advanced CT systems for metrology

Technological improvements, especially in the most advanced industrial CT systems, now ensure consistent delivery of the required precision with fast scans and high sample throughput. For instance, a powerful new 300 kV microfocus CT system for 3D metrology and defect analysis such as the Phoenix V|tome|x combines a variety of proprietary CT innovations for reproducible precision measurements in a comparatively short time.

This kind of technology can automatically remove scatter artifacts in just a few minutes and achieves significantly higher image quality, especially with highly absorbing samples. Comparable quality could otherwise only be achieved with significantly slower line tomography, which takes about one hour, or by investing in more expensive 450 kV high-energy CT equipment.

User-friendly solutions for fully automated scans

CT scanning software can include all necessary processing functions for control and calibration of the tomography system, acquisition of projection data, fast and optimized reconstruction of volumes, generation of geometrically correct surface data of the scanned object, and the execution of measurements with suitable software packages, all highly intuitive and, if required, fully automated at the push of a button in selected cases. These core functions can be complemented by precisely tailored automation solutions for sample and filter changes.

Quick and uncomplicated calibration

As with any other metrological technology, regular re-calibration is essential to ensure the specified measuring accuracy of the overall system.

The very high degree of automation in calibration and in validation of the precision level, significantly shorter scanning times and extended evaluation options have made CT technology a real alternative for precision measurements in production environments.

All images copyright ‘Waygate Technologies’

Florian Knigge is the Technical Director CT Metrology at Waygate Technologies, a Baker Hughes business (formerly GE Inspection Technologies).

For more information, email Florian.Knigge@bakerhughes.com or visit www.waygate-tech.com.
